How Motivated Reasoning Shapes How We View the World

And what we can do to be a tad more objective.

Imagine the following scenario: you're with your friends, discussing important things. The conversation steers toward an issue that's dear to you and that you're also knowledgeable about. Say it's the impact of livestock on the planet (or feminism, or whatever else keeps you awake at night). You have the facts - factory farming:

is responsible for a gargantuan land loss,

leads to water and air pollution,

is a big driver of climate change.

Besides the facts, you're also (obviously) on the morally correct side: animals grown for food are living in horrendous conditions only to be slaughtered at the end of their short and miserable lives.

To undergird the environmental and moral arguments - because duh, they aren't enough to persuade the meatheads at the table - you can also discuss, at length, how eating meat is obviously bad for you: it was found to be associated with cancer, type 2 diabetes, and coronary heart disease (and it also makes you look ugly, just because).

Your victory is inevitable. You covered all the bases and the animal-eating persons at the table are bereft of any coherent arguments.

But one of them whips out this one YouTube video that debunks many of your claims: livestock is not that bad for the environment, it says. Heck, some practices, like regenerative farming, are even CO2 negative - they suck CO2 out of the atmosphere instead of adding it! The video also says that most of the water used to grow the titanic amounts of feed the livestock consumes is green water - not drinkable by humans. The arguments against meat begin to unravel.

Or do they? Turns out, there’s a debunking of the debunking, a debunk-ception, if you will. This, of course, prompts another round of discussion, and on and on it goes.

What do you think: are the people present at the table trying to zero in on the approximate environmental impact of factory farming? Or are these people trying to defend their beliefs, life choices, and "I'm a good person" identities at any cost?

Without taking too pessimistic a view of human nature, I'd say that most discussions about thorny issues - such as factory farming, feminism, or gun laws, which stem from systemic path dependencies, warring motivations, conflicting attitudes, moral sensitivities, and a gazillion other factors - are not about trying to find out the truth. They are about justifying arbitrary moral positions via motivated reasoning.

What is motivated reasoning, you ask? In her book The Scout Mindset, Julia Galef likens motivated reasoning to the soldier mindset. The analogy goes as follows: As a soldier, your main objective is to defend your side's position at all costs because you deem it important. To do that, you might systematically - and mostly unconsciously - tilt the truth to find the evidence you require. You know how it goes. If the first five Google results kind of sort of support your intuition, you’re convinced that your argument is valid. If not, well, the world hasn’t reached the same state of enlightenment that you have.

As a soldier, you (implicitly) believe that a certain amount of motivated reasoning is necessary to keep up morale, for self-esteem, comfort, or belonging. The questions that soldiers ask themselves are: can I believe it? and must I believe it?

In the example above, for an omnivore, acknowledging the issues associated with factory farming would mean admitting to not acting on a moral, environmental, and health-related issue that any intelligent and good person would act on[1]. Since the issue is complex enough, the question of whether one must believe the presented evidence starts tilting toward “no”.

Is there an alternative to motivated reasoning? Sure there is. Galef calls it the scout mindset.

As a scout, your chief objective isn’t to defend your position at all costs, but to find the truth, regardless of how unappetizing it is. The scout is, by necessity, remote from salient identities: he or she must report the situation as it is, not as they wish it to be.

So, if the accumulated evidence starts to point to the fact that factory farming is net-negative in terms of the environment, health, as well as morality, the scout at the table would give such a position more weight, regardless of whether she eats animal products herself or not.

The question that guides the scout’s reasoning: is this true?

As a further example, consider this tweet by Bethany Brookshire:

Monday morning observation:

I have “PhD” in my email signature. I sign my emails with just my name, no “Dr.” I email a lot of PhDs.

Their replies:

Men: “Dear Bethany.” “Hi Ms. Brookshire.”

Women: “Hi Dr. Brookshire.”

Of course, with the gender equality debate raging everywhere around the internet, the Tweet went viral. And understandably so: if the Zeitgeist is to uncover all the subtle inequalities between men and women, then this is an important piece of evidence that supports the intuition that women’s achievements are not being recognized.

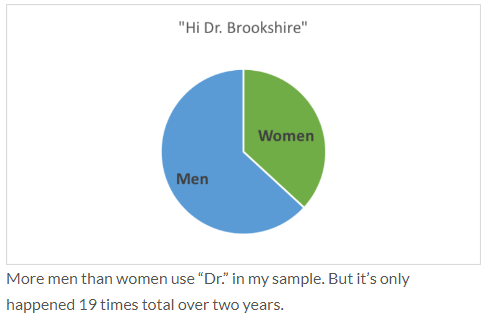

The intuition was also false, though, as Bethany herself discovered when she went through her emails and counted the instances of “Dr.” salutations from both men and women:

Now, the point of mentioning the anecdote isn’t to show there aren’t systemic gender issues. I’m quite sure there are and we ought to act on them whenever possible. Rather, the point is that our heads are filled with beliefs, attitudes, and inklings that skew how we perceive information.

But of course, you’re a smart person and know that, right? You’re aware that you’re biased and are quite confident that your arguments and the evidence that supports them are built on a solid basis, thank you very much.

I hate to burst your bubble, but even if you’re aware[2] of the phenomenon, you probably don’t even begin to grasp the extent of its influence on your reasoning. It reminds me of the opening of the commencement speech by the late David Foster Wallace:

“There are these two young fish swimming along and they happen to meet an older fish swimming the other way, who nods at them and says "Morning, boys. How's the water?" And the two young fish swim on for a bit, and then eventually one of them looks over at the other and goes "What the hell is water?"”

So, supposing that motivated reasoning is happening 99% of the time and we're pretty much blind to it, what can we do? If you think a bit like me, you might be persuaded by the following thought:

“Oh, I think that [insert a random belief I strongly believe in] is totally true. But knowing what I know now about human reasoning, "totally true" is probably an overstatement that I assumed at some point for convenience, comfort, belonging, whatever. How do I balance the scales a bit? Oh, I know! I can expose myself to the other side of [insert a random belief I strongly believe in]! That'll help me to understand the other side and have a more nuanced understanding!”

Such a thought is, in my humble and totally unbiased opinion, laudable and I welcome you to the club - it's at least the two of us now! If you also thought that that's how you can change - or at least adapt - your beliefs, we're on the same ship. Sadly, our ship is the Titanic... Why? Turns out exposing people to the polar opposites of their views doesn't make them understand the other side. It makes them even more polarized. And, funnily enough, the smarter the people are, the more polarized they become.

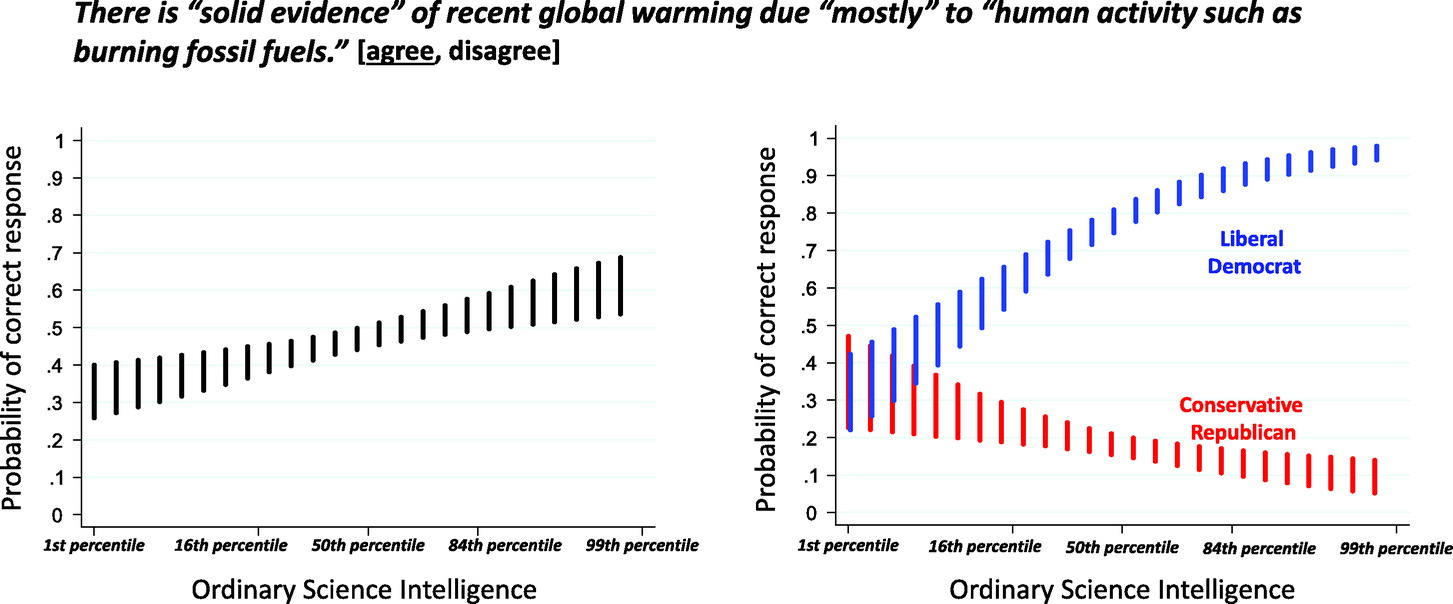

Galef cites a 2016 study by Dan Kahan. In it, Kahan gave people statements (like the ones below) and asked them to rate them as true or not. He then measured their political affiliation, religiosity, and “scientific savvy” with the Ordinary Science Intelligence scale (OSI)[3].

As you can see by the scissor-like pattern, scientific savviness biases individuals toward their salient identity: High-scoring OSI individuals were biased toward their political leanings on the question of human involvement in global warming (top right). Similarly, high-scoring OSI individuals believed in evolution based on their religiosity levels (bottom).

In other words - and at the risk of generalizing from one study too much - the smarter you are, the more likely you are to be biased. My own humble theory is that the more intellectual funk you have going on:

the more likely you are to convince yourself that your arguments are valid (because you collected a lot of evidence, reasoned it through extensively, whatever); and

the more likely others are to defer to your judgment as a "smart" person ("John is smart, he knows what the issue XYZ is about.").

Knowing about the replication crisis in psychology, it wouldn't hurt to take the edge off the above findings a bit: it's a Western, Americanized experiment tied to what is (from my perspective) a weird system of governance that puts people into two buckets (in terms of both political beliefs, liberals vs. conservatives, and religion, religious vs. atheists).

Still, I believe that there's some intuitive truth in it: the other day I tried to read a feminist manifesto with the intention to understand it better and ended up discarding it altogether since the opinions differed too much from what I believed in (also, honestly, the fact that the literature often reads like a piece from a postmodernist essay generator doesn't help either).

So, while exposing ourselves to opposing views might be laudable, it is woefully inefficient at turning absolutist beliefs into their more nuanced versions because the distance between the two positions is too great.

But that’s not what you want anyway, right? Not many of us are looking to completely upend our basic understanding of reality, disregard inclinations, and ultimately change affiliations. What we’re looking for is a bit of nuance, a somewhat clearer understanding of complex phenomena. Often, this will look like a middle ground between two extremes.

Where can this middle ground be found?

In adopting the standards and rules of communities with high epistemic standards.

In constantly testing our beliefs and adapting their likely truth value using thought experiments.

Let's dig into each. But before that, another comic strip.

High Epistemic Standard Communities

I think there's something to the old piece of wisdom that we are the average of the five people we spend the most time with. What does this mean in terms of the scout or soldier mindset? The group you surround yourself with - and the norms they consciously or unconsciously imprint on you - might be somewhere on the dimension between (soldier) motivated reasoning and (scout) accuracy-based reasoning. At the risk of oversimplifying the issue too much, I'd say that any place where the main axioms can't be questioned will lean toward the soldier mindset. Think about religious groups, conspiracy theorists, or cricket fan clubs.

On the other side, you have communities such as LessWrong, Effective Altruism, and the subreddit r/FeMRADebates, where you can discuss various issues without fear of recrimination. As long as you argue in good faith (and assume that others do so as well), don’t generalize your opinions (“all men are disgusting pigs”, “all women are stuck-up bitches”), and support your claims with evidence, anything goes. Staying with r/FeMRADebates, those are actually the rules. And not only are they stated, but people are also called out when they don’t follow them.

For instance, the user MintyAqua recounts his struggle with being a black man, and how he feels that he’s unjustly bunched together with privileged white men by some feminists:

[…] While I acknowledge that there are some feminists tackling the topic of race, most notably intersectional feminists, is still feels like a massive blind spot. Intersectional feminists regularly argue race issues from women's standpoint. I realize there are some feminists that argue this is a patriarchal issue and they seem to argue it in good faith that men are just as much victims of patriarchy as women are, but I'm not seeing it. I expressed these feelings to a female feminist friend and she said it's just patriarchy and men being placed in boxes. She names the problem but gives zero solutions. She blames patriarchy but it's women that told me not to cry at my own fathers funeral and that I had to stay strong "for everyone else". It was women that said my worth was in my penis. It was women that judge me because I'm less than 6 foot tall. And yet it's men that are the most understanding of my concerns and issues and I'm seen as a bad person when I say I'm not a feminist. My friend stated that women uphold patriarchy just as much as men, but isn't that a little convenient? I feel like these big sweeping things like patriarchy are only held in place arguments to keep women from accepting responsibility for their actions. "Yes you were abuse and assaulted but really it was the patriarchy and therefore men who you should actually blame." Why would any man want to sign up for that? Please humor me.

What you’ll first see if you skim through the thread, is the commentary by the mod:

This thread was reported and Sandboxed (by locking) for Insulting Generalizations (Rule 2) against both feminists and men. So that we can unlock your post, please edit it to:

Acknowledge that many feminists do focus on racial issues, and that you should have said some feminist arguments have these blind spots.

Remove or dial back the insulting generalization about men viewing women as property / being unable to care for themselves. "Many" does not adequately acknowledge diversity in a group - see our Rules Examples for more about what is and isn't allowed here.

EDIT: revised and unlocked; updated link

And comments such as these:

I try to tell people not to let dipshits ruin feminism for them, but I understand if it feels personal for you. Admittedly, black men’s issues is extremely low on the priority list for most feminists, and I’d like to see that resolved. I had heard about the sexualization of black men and boys, but reading this makes it painfully more real to me, and emphasizes how I’ve been neglecting consideration on this. Thank you.

You really do sound like an intersectional feminist in this post, btw. Like, I could see an intersectional feminist making these exact same critiques.

Now, I wouldn’t consider myself a feminist exactly because I feel the term encompasses too much, yet misses a lot as well. Like veganism, the term has baggage despite best intentions. Nevertheless, I’m glad the issues are being acknowledged and discussed. Anyway, the point? Instead of hurling missiles from the trenches like soldiers, people in places like r/FeMRADebates scout the landscape and try to form the most accurate picture of it because that’s what the community, and the rules that uphold it, are about.

You can apply some of the rules in the discussions with your friends. Whenever someone makes a sweeping generalization, call them out on it. When someone starts saying we should focus more on X because this person feels strongly about it, ask them for evidence. For instance, in another discussion in r/FeMRADebates, you can read that men are raped at the same rate as women. Why, then, do I get the sense in many discussions that women deserve - and get - more care when it comes to these issues?

Anyway, what if you don’t care so much for discussions and want to improve your own reasoning? That’s where thought experiments come in.

Thought Experiments to the Rescue

Galef mentions five common thought experiments that you can use to test whether you're likely under the influence of motivated reasoning.

The Double Standard Test: Are you judging one person (or group) by a different standard than you would use for another person (or group)?

Sometimes, we need a bit of perspective before we judge. Galef recounts a story of a military cadet, Dan, who believed the women in the military high school were all "stuck-up bitches" because they didn't give him any attention. What he didn't consider whilst wallowing in his misery is that there were 250 boys and 30 girls in the school, so the girls had plenty of choices. After doing the double standard experiment, he could see why the girls didn't give him (a socially awkward fellow) much attention: there were plenty of other - more delicious - fish in the sea. And had he been in the same position as the girls, he’d probably have acted the same.

The Outsider Test: How would you evaluate this situation if it wasn’t your situation?

Sometimes, we're stuck in an unenviable situation because we’re invested in it - either through time, money, or energy. Think of a relationship, job, whatever. What this test asks us to consider is what an outsider without any baggage would do: Would they put up with your partner's tantrums or an egoistic boss?

To illustrate, Galef recounts a story of Intel, the computer giant. At that particular time, Intel’s identity - and with it probably a lot of assets - was all about memory chips. Soon, however, Japanese competitors came up with a better method to manufacture memory chips and Intel was faced with an existential challenge.

Andy Grove, one of Intel's earliest employees and later its CEO, writes in his book:

Our mood was downbeat. I looked out the window at the Ferris wheel of the Great America amusement park revolving in the distance, then I turned back to Gordon and I asked, “If we got kicked out and the board brought in a new CEO, what do you think he would do?” Gordon answered without hesitation, “He would get us out of memories.” I stared at him, numb, then said, “Why shouldn’t you and I walk out the door, come back and do it ourselves?”

Sometimes, you need to “walk out the door” yourself and get a fresh perspective. The Outsider Test will help you do that.

The Conformity Test: If other people no longer held this view, would you still hold it?

Sometimes, we believe things because others do. In high school, I had a very convincing friend who was very opinionated. So, when he shared his theories about the world (most of them including conspiracies and spiritual things), I mostly nodded, listened, and picked up a thing or two. When I pushed back on some things, he was mostly able to persuade me (since I was pretty much a tabula rasa back then, this wasn't all that difficult). And so it came to be that even now, 10+ years later, I still somewhat believe some of the things we discussed back then, with no firmer basis than the word of two snotty teenagers exploring their naive worldviews.

Similarly, I think many of us also base our opinions on authority or social consensus. And while these are usually helpful heuristics, they can also hamper your progress. The conformity test would help you test this assumption.

The Selective Skeptic Test: If this evidence supported the other side, how credible would you judge it to be?

Sometimes, we are too harsh or permissive, depending on our attitudes and beliefs. For instance, if you showed me a study that claimed to find that men perceive women literally as objects, you bet I'd take a closer look at the study and its rebuttals: it's controversial, a bit out there, and it smells of scientism because the researchers seem to use the scientific method as a means to confirm their beliefs. In short, they act more like soldiers than scouts.

But if you showed me an anecdote in some random backwater Tumblr blog saying that agribusiness is the root of all evil, I'd smile, nod, and feel validated in having the opinions I have already.

To get a clearer picture, as per the Selective Skeptic Test, we should treat both the confirming and the conflicting evidence the same.

The Status Quo Bias Test: If your current situation was not the status quo, would you actively choose it?

Sometimes, we are stuck in a status quo because we have a propensity for inaction. The Status Quo Bias Test helps to counteract this propensity by making us think about whether we’d act to be in the same position we are now. Would we actively choose the circumstances - job, relationship, hobbies, whatever - we have? Or have we become wound up at some point like a toy car, and most of what we do is based on inertia?

These tests aren't a silver bullet but they're a start. I think we'd all profit from a bit of epistemic hygiene. What are the beliefs that you think are unquestionably true? What are the axioms that you don't question so that you can go on living the way you do? What are the bounded rationalities that govern - and skew - your understanding of the complex situations around you? You can use some - or all - of these experiments to establish how firm the basis for your beliefs is, and whether some of them need adapting.

Conclusions

The most insidious thing about motivated reasoning is that we're really good at spotting it in others but suck big time at seeing it in ourselves. The consequence is that we walk around as the infallible Gods of Objectivity, while everyone else looks like a sophisticated, yet still biased, primate (something tells me that psychological training will make you perceive others like that anyway).

Adapting beliefs or being wrong is thus something for others, but not for us. But if anything, Galef's argumentation made it very clear that knowing about biases, being smart, or being an expert in something, don't help as much as one would think in terms of the accuracy of one's beliefs. In fact, they can pretty much lead to the opposite: overconfidence, hubris, and a career as a wine sommelier.

Am I exempt from the biases above? One part of me says of course! Reading an entire book about motivated reasoning + having psychology training and I'm pretty much the God I described above. Yet, the humbler part of me - which I hope will take over more often than it does now - will help me calibrate my existing beliefs. Assuming that the search for truth and coherence is meaningful, being more of a scout than a soldier should be as well.

[1] Which, of course, all the persons at the table consider themselves to be.

[2] How aware exactly, by the way, on the spectrum from the expert-level awareness of “I studied this, read books and give workshops on this, and I train others to recognize it” to “oh, I once read an article about confirmation bias on Vox. Interesting stuff.”?

[3] Some example items:

Facts: Lasers work by focusing sound waves. [True or False]

Methods: A doctor tells a couple that their genetic makeup means that they’ve got one in four chances of having a child with an inherited illness. Does this mean that if their first child has the illness, the next three will not? (Yes/No)

Quantitative Reasoning: If the chance of getting a disease is 20 out of 100, this would be the same as having a _% chance of getting the disease. [open ended: 20 or equivalent]

Cognitive Reflection: If it takes 5 machines 5 min to make 5 widgets, how long would it take 100 machines to make 100 widgets? ___ minutes [open ended: 5]

![[later] I can't believe how bad corruption has become, especially given that our league split off from the statewide one a month ago SPECIFICALLY to protest this kind of flagrantly biased judging.](https://substackcdn.com/image/fetch/$s_!Zhqs!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fbucketeer-e05bbc84-baa3-437e-9518-adb32be77984.s3.amazonaws.com%2Fpublic%2Fimages%2F89d49103-7db7-4f14-88e9-3bc1a5468ed1_302x392.png)