Angler Fish Are Invading Psychology

An investigation into psychology's guiding metaphor, spiced with odd animal-mating analogies.

Hello, people of the internet. This one might be a bit too dense to chew through without a background in science (and psychology), but you can try regardless. If you don’t understand something, I encourage you to keep going: it gets better (and funnier) as you go.

TL;DR: psychology lives under the assumption that we are solving our issues, but actually, we aren’t, because we are still working within the same context-naive paradigm. Any meaningful advances will require finding out how to account for context.

The rest is just weird analogies…

Psychology hasn't made much progress in the last few decades, despite the increasing number of positive findings. If you look at the graph below, you’ll see that there are way more publications (more dots toward the later years), but we haven't made any meaningful progress in predictive power (the horizontal line is flat).

Of course, this is only one measure of progress[1]. Still, if we assume that psychology is an empirical science, which it claims to be, this is troubling: there seems to be no reason to collect all this data if we’re not improving our predictions. We're basically heaping grains of sand on top of each other, only to say that we now have a much bigger pile of sand. But something resembling a castle (i.e., a theory that ties all the grains together) is in short supply. A toddler might be happy with this (a bigger pile of sand is much more awesome), but I, being a tad more sophisticated than a toddler, expect something more.

Also, whatever tentative towers, portcullises, murder holes, ramparts and other castle parts we might’ve molded from our pile of sand so far, they're often kicked down by a nasty bully. Their name is Jake, they identify as a ghost of undefined gender, and they manifest in mysterious ways (gender-fluid ghosts tend to do this). Sometimes, Jake assumes the form of a failed replication and revels in the despair of the original study's researchers[2]. Sometimes, Jake possesses other guys to write papers, like this one, claiming (very coherently for a ghost!) that the concepts in psychology don't generalize (possibly because they are wobbly, a.k.a. my penguin is not your penguin). And then sometimes, Jake gets really poltergeist-y with Excel spreadsheets, conjuring up fake data[3]. Then, because Jake is not above the usual bad scientific practices, they enjoy some good-natured HARKing (hypothesizing after the results are known) and p-hacking from time to time, though less so lately, because people are onto them and came up with the concept of Open Science[4]. And then sometimes, Jake possesses random internet guys who question the questionable quality of our questionnaires and famous paradigmatic "theories". In short, one reason our sandcastle doesn’t grow is Jake.

Now, there are efforts to improve the situation (i.e., to contain Jake). As mentioned in the previous paragraph, we've developed and implemented the concept of Open Science, which helps keep honest researchers in check[5] and helps expose fraud[6]. This is done through various standardized practices, such as pre-registered protocols in which researchers commit to their hypotheses, sampling schemes, and statistical procedures in advance. These are available for anyone to see, and as long as the Internet (and the website) is up and running, anyone can grab the dataset and follow the steps to hopefully reproduce the results. Then we have Registered Reports, which better align the incentives of journals and researchers: a journal agrees to publish the study at the design stage, regardless of what the results turn out to be. Compared to standard studies, 96% of which report positive results, studies published as Registered Reports sport only 44%[7].

Then we have methodological practices like:

(numbers from 1 to 5 indicate my subjective belief in how widely adopted this practice actually is; 5 being super adopted, 1 being anywhere from no effort to token effort, basically just treading water)

making sure our samples are representative of the population. 2

making sure that, before we posit some universals, we actually test more than WEIRD populations (Western, Educated, Industrialized, Rich, and Democratic) ~ 2

doing more theory testing[8] (not 8, that’s just a footnote. The rating here is 1)

Statistical practices like:

Calculating power a priori, which lets us choose appropriate sample sizes. 5

Using more comprehensive, multilevel models that include a wider range of variables. 4

Embracing Bayesian statistics and taking priors seriously instead of dismissing them as “too much subjectivity”. 2 (unsure)

Writing practices like:

making sure we don't overdraw our inferences beyond what the data supports. 1

constraints on generality statements and limiting the “hype” lingo that makes the results sound more interesting than they are (for a fun read, or rather an angry rant, about that, read my article here). Also ~ 1

actually abandoning writing where writing is not the best medium to convey the study, i.e., adopting scientific videos, VR programs, etc. as alternative mediums. 0?
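“Calculating power a priori” can sound abstract, so here is a minimal sketch of what it involves, assuming the simplest case: two independent groups, a two-sided test, and the normal approximation to the t-test (exact t-based calculators give a participant or two more per group). The function name `n_per_group` and the effect sizes plugged in are illustrative choices, not taken from any particular study.

```python
import math
from statistics import NormalDist


def n_per_group(d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate participants needed per group for a two-sided, two-group comparison.

    Normal approximation: n = 2 * (z_{1 - alpha/2} + z_{power})**2 / d**2,
    where d is the standardized effect size (Cohen's d).
    """
    if d <= 0:
        raise ValueError("effect size d must be positive")
    std_normal = NormalDist()  # mean 0, sd 1
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)  # two-sided critical value (~1.96)
    z_power = std_normal.inv_cdf(power)          # quantile for the desired power (~0.84)
    return math.ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)


if __name__ == "__main__":
    for d in (0.8, 0.5, 0.2):  # conventional "large", "medium", "small" effects
        print(f"d = {d}: about {n_per_group(d)} participants per group")
```

Under these assumptions the sketch gives about 25, 63, and 393 participants per group for d = 0.8, 0.5, and 0.2. Shrinking the expected effect quickly inflates the required sample, which is why the calculation has to happen before collecting data rather than after.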

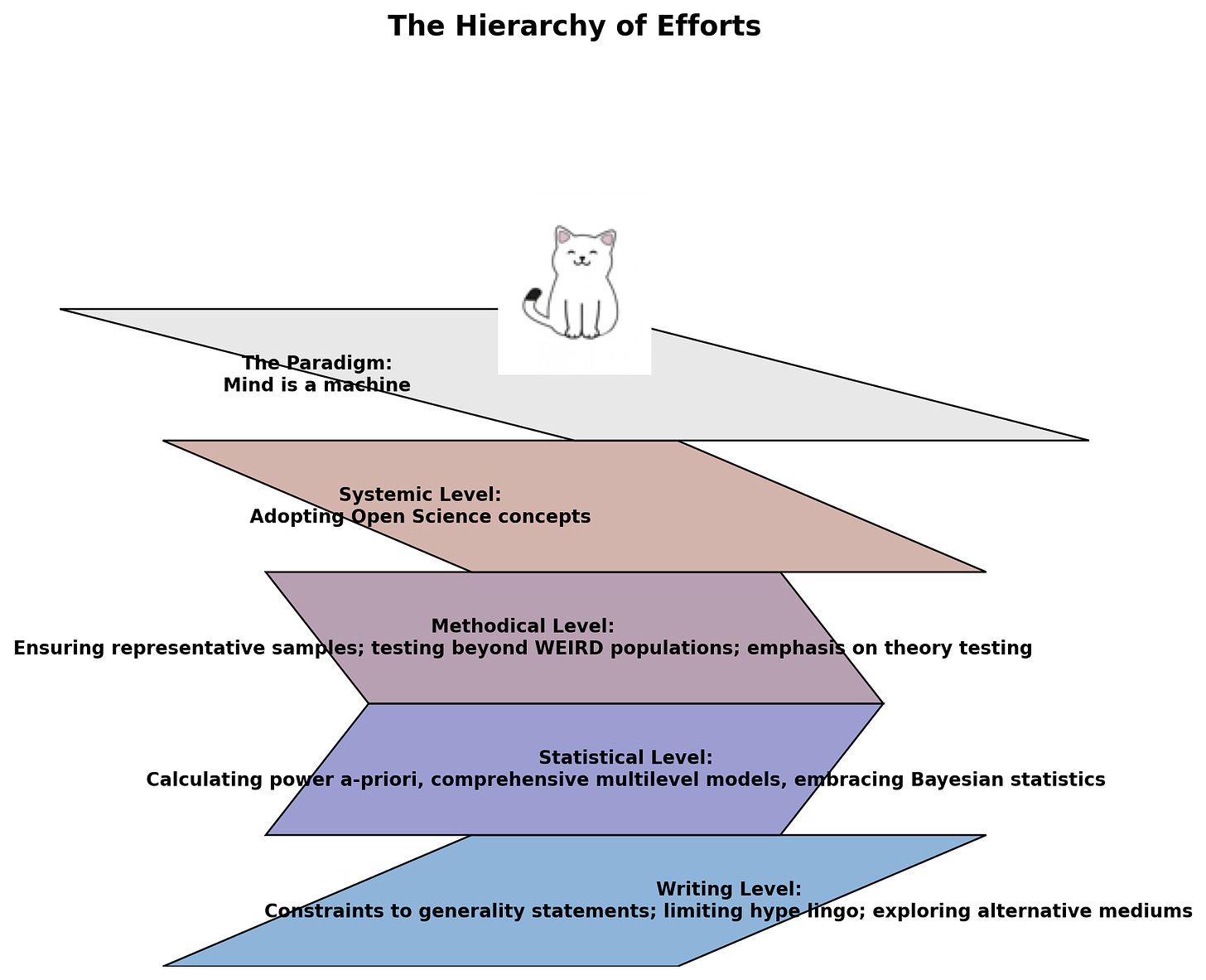

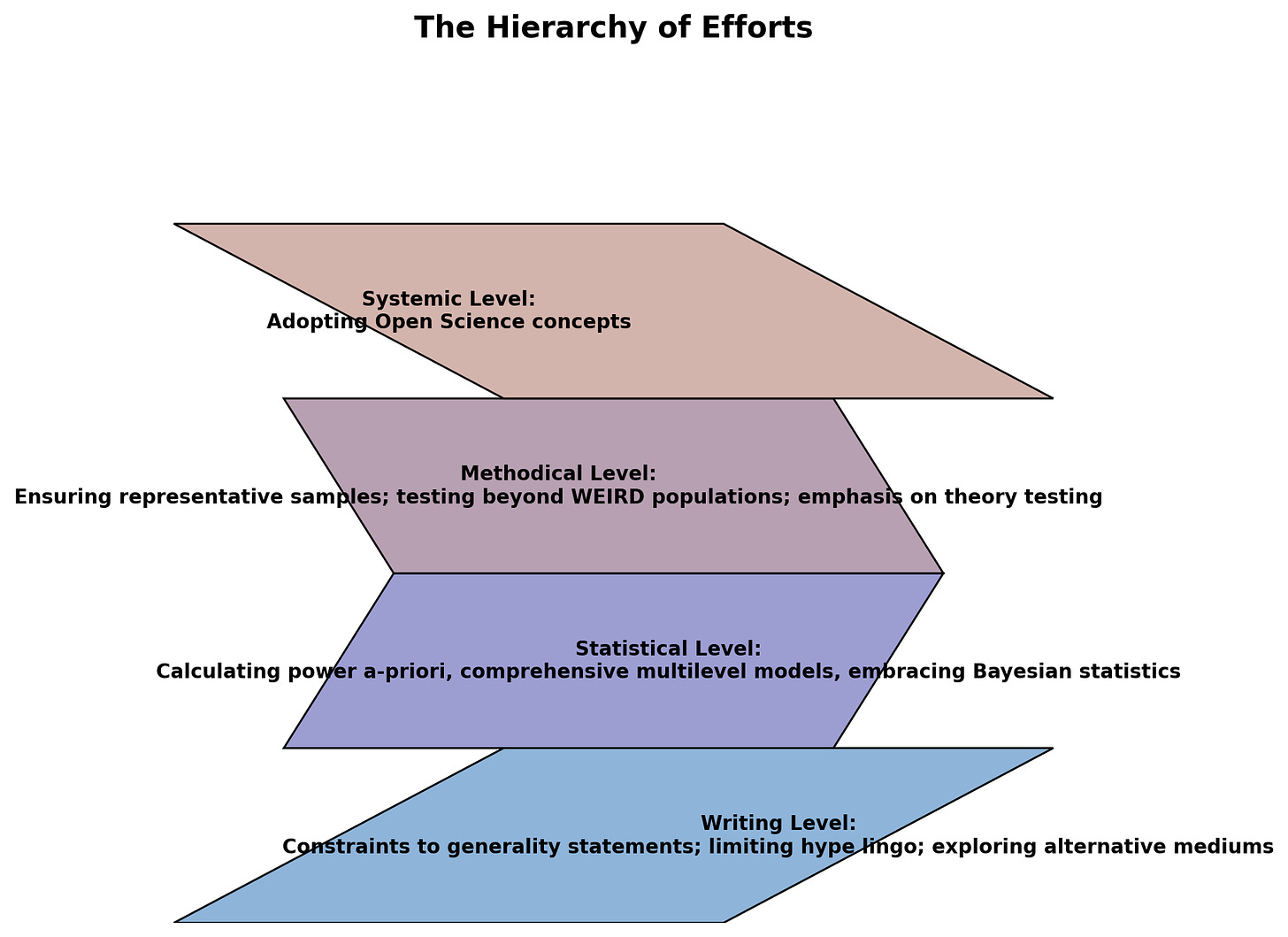

And many more. Now let’s imagine them as the “Hierarchy of Efforts”:

(Now this, ladies and gentlemen, is what happens when you use ChatGPT’s Python coding function and tell it to plot a pyramid. I found it too hilarious to spoil the fun by creating a “proper” pyramid. Also, I’m lazy, and it actually fulfills the purpose, kind of[9].)

Now consider: it's a grand assault on all fronts, Normandy-like (I don't know anything about Normandy, but it sounded appropriate); we're trying to improve the field on almost all levels of the ... geometric shapes (not a pyramid), but unfortunately this grand assault is attacking the wrong beach (Normandy was a beach, right?). That's because we don't touch the top of the pyramid, the golden goose, the paradigm that perpetuates itself.

The “Scarring You For Life with Weird Analogies” Section

Here's where we look at metaphor to take a stab at why that is. You see, a metaphor is a way of understanding something by comparing it to another thing. For instance, a DYSON AIRBLADE is kind of like a tiny tornado for your hands.

Of course, the DYSON AIRBLADE isn't a tiny tornado for your hands, but you get the point: tons of wind = dry hands. This example is trivial and doesn't really drive the point home[10]. But consider this: most (perhaps all!) of what we understand comes by way of metaphor. Here are some less trivial examples, taken from the excellent Metaphors We Live By by George Lakoff and Mark Johnson:

ARGUMENT IS WAR. This metaphor is reflected in our everyday language by a wide variety of expressions: Your claims are indefensible. He attacked every weak point in my argument. His criticisms were right on target. I demolished his argument. I've never won an argument with him. You disagree? Okay, shoot! If you use that strategy, he'll wipe you out. He shot down all of my arguments.

Or

HAPPY IS UP; SAD IS DOWN. I'm feeling up. That boosted my spirits. My spirits rose. You're in high spirits. Thinking about her always gives me a lift. I'm feeling down. I'm depressed. He's really low these days.

Or

THEORIES (AND ARGUMENTS) ARE BUILDINGS. Is that the foundation for your theory? The theory needs more support. The argument is shaky. We need some more facts or the argument will fall apart.

The way we, for instance, approach argument (as a war) guides everything about any argument-related situation: if we see argument as war, we quibble to defend our position, attack others' arguments, and so on.

Now back to psychology. I'd say the guiding metaphor is MIND IS A MACHINE, or MIAM for short (sounds like a cat, doesn't it?). It comes up in common expressions like these:

My mind is racing.

I'm a bit rusty today.

Let me process that.

I'm trying to get my mind in gear.

I've got too many tabs open in my brain right now.

More importantly, in the "science" of psychology (= quantitative psychology = most of psychology), MIAM leads to what Lisa Feldman Barrett calls the mechanistic mindset. The main assumption[11] that flows from this mindset is this:

Psychological events or entities (i.e., the main currency in psychology) are stable and intrinsic to the organism (i.e., there is something, perhaps in your neural substrate, that is your personality). Context is a nuisance variable and, crucially, somewhere outside of a person.

Now before we delve into the intricacies of the mating ritual of angler fish (yes, it's coming, read on!), it's crucial to define what I mean by context, because it's such a vague word. Here:

The context of a person’s experience and acting is everything that, if changed, changes this experiencing and acting.

And

Psychological processes are determined by indefinitely complex and irreversibly changing contexts.

Emphases mine.

So what does this mean? Well, the first part, in my understanding, means that context and person are basically one unit. Without the context, no psychological processes or experiences would manifest the way they did (i.e., in a questionnaire, in an observation, in an interview, etc.). The biology of the brain, and whatever psychological event it creates, is directly tied to the environment[12]. In other words, context and person are so intertwined that you can't disentangle them.

Like two wolves that have just mated, context and person can't be pulled apart... for ~30 minutes or so, anyway. So we have to get even more drastic with this analogy. But before I scar you for life, here's a BBC video of wolves mating, which is cute and hilarious and educational and a much-needed break from this dense article!

Ah, wholesome.

Anyway, this is what we're actually going to go with as an analogy: context and person are like male and female angler fish!

Here's Wikipedia:

When a male finds a female, he bites into her skin, and releases an enzyme that digests the skin of his mouth and her body, fusing the pair down to the blood-vessel level. The male becomes dependent on the female host for survival by receiving nutrients via their shared circulatory system, and provides sperm to the female in return. After fusing, males increase in volume and become much larger relative to free-living males of the species. They live and remain reproductively functional as long as the female lives, and can take part in multiple spawnings. This extreme sexual dimorphism ensures that when the female is ready to spawn, she has a mate immediately available. Multiple males can be incorporated into a single individual female with up to eight males in some species, though some taxa appear to have a "one male per female" rule.

So context basically merges with a person so completely that the two become a single unit, and disentangling them is impossible.

Anyway, let's address the second part of the context definition:

Psychological processes are determined by indefinitely complex and irreversibly changing contexts.

Indefinitely complex basically means, in experimental terms, that there are approximately (estimate accurate to a tenth of a decimal place) BAJILLION contextual variables that all meaningfully (albeit weakly) influence psychological processes. Irreversibly changing means you can't un-experience or un-act something. How this relates to the concept of replication, I shall discuss in a bit.

So context, far from being a nuisance variable, is a cause of (or at least a major contributor to) psychological events and behaviors. It's also, for all intents and purposes, completely uncontrollable and chaotic, i.e., beyond what most psychological models can account for.

MIAM Meows The Irreconcilable Issues in Psychology

Now, MIAM and the mechanistic mindset it engenders in researchers have implications for literally everything we do in psychology: how we set up our experiments, what data we gather, how we interpret it, and how we think about it. It also determines the efforts we direct at mitigating the problems I outlined above.

So let's imagine MIAM sitting on top of our pyramid (sorry, jumble of geometrical shapes), like so:

Misguided assumption #1 - the need to replicate the unreplicable

Let us consider the concept of replication as an example. The only reason we make such a big hullabaloo about the replication crisis in psychology, and proceed to invest an ungodly amount of time, energy, and money into extensive replications of some concept, is that we assume psychology is an empirical science (and thus cumulative in nature) and that the concepts we dig up ought to replicate, because that's the most basic requirement of empirical science. If I find some regularity (not a law; there are no laws in psychology), then, presumably, we ought to find it in different populations, at different times, and so on. This is guided by the assumption that there are intrinsic and somewhat stable psychological events and entities within a person, and that we can more or less capture them through labels. But this entire endeavor is misguided a priori. That is because context, as we've learned, isn't extrinsic to a person: it is very much a male angler fish (yes, we’re there again). We can never exist (or survive) without a context. And whatever context people smuggle into the experiments they participate in is simply irreconcilable from person to person, and from study to study. Yet, despite this, we bunch people up in our analyses and compute our statistics with means, medians, or other measures of central tendency. And then (get this!) we expect our experiments to replicate! BWAHAHA, what a magnificent joke. Yet we play the “psychology is an empirical science” game, so we aim to replicate studies regardless, because that's what the overarching paradigm prescribes.
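Here is a toy illustration of that argument, with invented numbers. It assumes the same manipulation has a fixed effect within each of two hypothetical contexts (helpful in one, mildly harmful in the other) and that the only thing that changes between an “original” study and its “replication” is the mix of contexts the participants bring along. Everything else, including sample size and analysis, is held identical; the names `EFFECT_BY_CONTEXT` and `run_study` are made up for this sketch.

```python
import random
from statistics import fmean

# Hypothetical effect of the same manipulation in two kinds of context
EFFECT_BY_CONTEXT = {"context_a": 0.5, "context_b": -0.2}


def run_study(share_context_a, n_per_group=5000, seed=0):
    """Simulate a two-group study; return the mean difference (treated minus control)."""
    rng = random.Random(seed)

    def outcome(treated):
        # Each participant arrives with a context we neither control nor record
        context = "context_a" if rng.random() < share_context_a else "context_b"
        effect = EFFECT_BY_CONTEXT[context] if treated else 0.0
        return effect + rng.gauss(0, 1)  # plus ordinary person-level noise

    treated = [outcome(True) for _ in range(n_per_group)]
    control = [outcome(False) for _ in range(n_per_group)]
    return fmean(treated) - fmean(control)


if __name__ == "__main__":
    original = run_study(share_context_a=0.8, seed=1)
    replication = run_study(share_context_a=0.2, seed=2)
    print(f"original: {original:+.2f}, replication: {replication:+.2f}")
```

The expected averages are 0.8 × 0.5 + 0.2 × (−0.2) = 0.36 for the original and 0.2 × 0.5 + 0.8 × (−0.2) = −0.06 for the replication, so the “same” effect looks solid in one sample and slightly reversed in the other, with no fraud or p-hacking involved. Averaging over participants hides exactly the thing that changed.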

Anyway, moving on.

Misguided assumption #2 - Context is a nuisance variable

Consider also the gold standard of evidence that psychology (and any other empirical science) can provide: the Randomized Controlled Trial, or RCT for short. Here we randomly assign participants to two groups (ideally double-blind, so that neither participants nor experimenters know who is in which), measure some variable of interest before and after, and introduce a manipulation (the treatment) only in the experimental group, while the other group receives none. Again, even though we assume that this is the highest-grade evidence out there, I'd say it is misguided a priori: in way more cases than not, there are no singular critical factors (the stuff you manipulate in an RCT) that cause psychological events; rather, it's a bunch of weak, interacting, and emergent factors (our dear old friend CONTEXT) that collectively cause psychological events[13]. And the thing is, even in an immaculately designed experiment, where the researchers try to account for as many context variables as possible, that's still misguided! That is because those are just a handful of instances of context they are controlling for, an insignificant smidgen. Also, those are only the “known” contextual factors. Consider other, seemingly irrelevant factors that nobody cares to control for (not least because it's impossible):

Physical Activity Level

Diet and Nutrition

Commute Time

Occupational Demands

Living Situation

Parental Influence

Environmental Awareness

Life Transitions

Cultural Events

Medical Conditions

Spiritual Beliefs

Time of Day

Seasonal Affective Disorder

Urban vs. Rural Residence

This is just off the top of ChatGPT’s imaginary head. In reality, there’s an endless array of variables!

And let's just get ridiculous with a purely hypothetical scenario: even if you get there and control for all the variables (A for effort, by the way, and pure horror for the participants), you've just shot yourself in the knee: how do you model their influence? Don't tell me... you can't possibly mean... ah, you think they influence your dependent variable in a neat little, humanly comprehensible LINEAR fashion? Oh no, A for effort but D for common sense! Of course, these variables will interact in an endless combinatorial explosion. Fingers crossed for a room-temperature, ambient-pressure superconductor to perform all these computations.
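Plain counting already shows the explosion. This sketch assumes we model only the 14 factors listed above, each as a single variable, and ignores non-linear terms (squares, thresholds, and so on), which would only make matters worse; `n_model_terms` is a hypothetical helper written for this illustration.

```python
from math import comb
from typing import Optional

# The 14 contextual factors listed above
FACTORS = [
    "Physical Activity Level", "Diet and Nutrition", "Commute Time",
    "Occupational Demands", "Living Situation", "Parental Influence",
    "Environmental Awareness", "Life Transitions", "Cultural Events",
    "Medical Conditions", "Spiritual Beliefs", "Time of Day",
    "Seasonal Affective Disorder", "Urban vs. Rural Residence",
]


def n_model_terms(k: int, max_order: Optional[int] = None) -> int:
    """Count main effects plus all interactions among k variables, up to max_order-way."""
    max_order = k if max_order is None else max_order
    # comb(k, order) = number of distinct order-way combinations of the k variables
    return sum(comb(k, order) for order in range(1, max_order + 1))


if __name__ == "__main__":
    k = len(FACTORS)
    print("main effects only:        ", n_model_terms(k, 1))
    print("plus two-way interactions:", n_model_terms(k, 2))
    print("all interactions:         ", n_model_terms(k))
```

That gives 14 main effects, 105 terms once two-way interactions are included, and 16,383 (that is, 2¹⁴ − 1) once every interaction is allowed. Each term is a parameter to estimate from data, and that is for 14 variables, not a bajillion.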

But let us make this abstract talk a bit more concrete and consider what actually happens when you have misguided assumptions about the role of context in a person’s psyche. Spoiler alert: you come out with a completely different theory of how things work!

Emotions are Universal…Or Are They?

Let's say, for the sake of kicks and giggles, that you want to participate in a psychological study. You know, to change things up and help advance science. In this particular situation, you're also Turkish, because that's the random study I found that employed the classic emotion recognition method we're interested in. Slightly confused about your newfound Turkish heritage, but still determined to participate, you settle in front of a screen where you are shown faces like these:

Yes, these are what, 90s faces? Black and white? Sideburns??[14] Rad. Anyway, after you've most certainly had your brief moment of time and culture shock (because, remember, this is 2023, you're Turkish, and this is not how people look in 2023, nor how Turkish people look[15]), you are asked to indicate which of the following options best matches the face shown:

“happiness”

“sadness”

“fear”

“anger”

“surprise”

“disgust”

or “neutral”

So you kinda eyeball whichever word best fits the facial expression being shown and choose the corresponding answer option. And now for the fun part: you rinse and repeat the process 110 times. Yay. But it's science, so you plod on, determined, because you are a good little (still Turkish) participant. After your valiant effort, you are thanked and dismissed.

Anyway, this is apparently what 95% of emotion recognition experiments look like, and most of them deliver nigh-identical results:

[…] For example, in the most recent major meta-analysis of emotion recognition research, 95% of the included studies used choice-from-array and observed that participants from Western parts of the world (e.g., Germany, France, Italy, etc.) chose the expected word or face about 85% of the time on average; the results were slightly lower (72%) in cultures that are less similar to the United States (e.g., Japan, Malaysia, Ethiopia; Elfenbein & Ambady, 2002).
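For scale: with seven answer options, a participant guessing blindly would pick the expected word about 1 time in 7. The quick calculation below assumes one answer per face and equally likely guesses, and uses the 110 trials and the 85% / 72% figures mentioned above; `times_chance` is a name made up for this sketch. The point is not that the reported accuracies are noise (they are far above chance), but that a short, fixed menu of words is itself part of the context that produces them.

```python
from fractions import Fraction

OPTIONS = ["happiness", "sadness", "fear", "anger", "surprise", "disgust", "neutral"]
N_TRIALS = 110  # trials in the example study described above


def times_chance(accuracy: float, n_options: int) -> float:
    """How many times higher an accuracy is than blind guessing among n_options."""
    return accuracy * n_options  # accuracy / (1 / n_options)


if __name__ == "__main__":
    chance = Fraction(1, len(OPTIONS))
    print(f"chance level: {float(chance):.1%}")
    print(f"correct answers expected from pure guessing: "
          f"{float(chance * N_TRIALS):.1f} of {N_TRIALS}")
    for label, accuracy in [("Western samples", 0.85), ("less US-like cultures", 0.72)]:
        print(f"{label}: {accuracy:.0%} is about "
              f"{times_chance(accuracy, len(OPTIONS)):.1f}x chance")
```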

Now, such findings lead us to assume that emotions are universal and culturally independent (with only their expressions, and not the emotions themselves, being culturally influenced). But if you believe Barrett's view (and I'm convinced), this is misguided, because:

there is “context embedded within choice-from-array” experiments, i.e., the way the experiment is set up (giving you predefined choices and the same faces from the 1990s) leads to certain answers.

there is “context hidden within repeated trials”. For instance, experimental trials for a stereotypical emotional expression study are blocked by emotion category, not randomized, leading the participants to link the experimental block to an emotion.

there is also “context hidden in the sampling of facial configurations”. Basically, the 90s faces you’ve seen above were posed by the researchers (or people aligned with them), who chose to display some prototypical facial expression. But our faces can make many, many more “faces” than that, and these aren’t considered at all.

(if this isn’t clear - or you want more detail - I encourage you to read the respective section of the paper).

If you account for all these issues (and many more), you arrive at the theory of constructed emotion, in which each instance of emotion is contingent upon dozens of contextual factors, purely emergent in any situation, and stemming from allostatic brain processes, rather than being universal and culturally independent.

It’s similar with the current paradigm in psychology: the way we set up our studies, think about them, make inferences, etc. produces a certain kind of result, results that only ever perpetuate the paradigm that gave rise to them. It’s an immortal closed loop that Mastroianni called the “pick a noun and study it” paradigm: you can always pick a noun, study it (i.e., correlate it with something else, see how it predicts another noun, produce a scale for it, etc.) and, crucially, get publishable results. That’s often all that matters. But, as argued throughout, this doesn’t move our understanding further (anymore). We’re stuck with a wrong way of looking at things, one that is disembodied from context, and one that desperately needs different methods. Let’s discuss that in the last section.

Not Really Helpful Solutions

MIAM is a potent metaphor, but I believe it's time we set it aside. We need a more nuanced understanding of the human psyche, one that takes context (in its complexity, chaos, and embeddedness within a person) into account. And if this means less quantitative psychology and more qualitative psychology, with its more honest approach to subjectivity and context, I'm all for it. If it also means that modeling and analysis become the main tools we use to excavate knowledge, I'm also for it, and I wish good luck and Godspeed to all the statisticians, mathematicians, and programmers who will be the only ones able to design and conduct such procedures[16]. Another idea is the radical acceptance of N = 1 experiments: experimenting on yourself, gathering the available data, and drawing your own conclusions. This, more than anything, is a commonsense way of applying any psychological findings in your own life (or recommending them to others): since whatever claim is made in any psychological study rests on averages, and often doesn’t replicate, chances are (high) that it won't be attuned to the particular smidgen of chaos that is you[17].
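For the N = 1 route, here is a minimal sketch of one way to run and analyze such a self-experiment, assuming a simple design: randomly assign each day to condition A (the change you want to try) or B (business as usual), rate your mood once a day, and compare the two with a permutation test. The 28-day length, the function names, and the simulated mood scores are all hypothetical, and this is just one reasonable analysis, not a prescribed method.

```python
import random
from statistics import fmean


def assign_days(n_days, seed=0):
    """Randomly assign each day to condition 'A' or 'B' (as close to half and half as possible)."""
    rng = random.Random(seed)
    labels = ["A"] * (n_days // 2) + ["B"] * (n_days - n_days // 2)
    rng.shuffle(labels)
    return labels


def mean_difference(labels, scores):
    """Mean score on A days minus mean score on B days."""
    a = [s for lab, s in zip(labels, scores) if lab == "A"]
    b = [s for lab, s in zip(labels, scores) if lab == "B"]
    return fmean(a) - fmean(b)


def permutation_test(labels, scores, n_permutations=10_000, seed=0):
    """Two-sided permutation test: how often does reshuffling the day labels
    produce a difference at least as large as the one observed?"""
    rng = random.Random(seed)
    observed = mean_difference(labels, scores)
    shuffled = list(labels)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(shuffled)
        if abs(mean_difference(shuffled, scores)) >= abs(observed):
            at_least_as_extreme += 1
    # Add-one correction so the estimated p-value is never exactly zero
    return observed, (at_least_as_extreme + 1) / (n_permutations + 1)


if __name__ == "__main__":
    labels = assign_days(28)
    # Simulated stand-in for real diary data: A days average one point higher
    rng = random.Random(1)
    mood = [rng.gauss(6.0 if lab == "A" else 5.0, 1.0) for lab in labels]
    diff, p = permutation_test(labels, mood)
    print(f"A minus B: {diff:+.2f} mood points, permutation p = {p:.3f}")
```

Randomizing the days, rather than doing two weeks of A followed by two weeks of B, keeps a stressful week or a change of season from lining up with one condition, which is the same context problem on a personal scale.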

But again, remember: the increased use and acceptance of qualitative methods, a complete migration from experimentation to analysis and modeling, and somewhat hybrid approaches like N = 1 designs all hinge upon one thing: abandoning the golden goose (the MIAM metaphor) and the mechanistic mindset. I mean, I’m generally pessimistic, but there are precedents[18], so I'm not entirely without hope.

Other people have written that psychology, unlike other sciences, doesn't have a single castle (i.e., a single guiding theory) in the sand: we have many disparate ones, which possibly reflects the plurality and complexity of the subject matter (humans). My hope isn't that we somehow become physics. My hope is that we finally accept the field of psychology for what it actually is: a qualitative investigation of the human psyche, not an empirical science. Accepting that, I believe, will finally let us progress beyond our measly 42% of explained variance.

[1] And people argue that this is a general finding across disciplines; see here. Nevertheless, we barge right through this technicality and assume the worst for psychology.

[2] See here for some replications and reversals (i.e., cases where a replication produced the opposite effect to the original one).

[4] Open Science sounds more professional than "Fuck Off, Jake, Get Off Our Methods" Initiative. But make no mistake, that's what's actually meant.

[5] There are always tons of degrees of freedom in any psychological study, and with so much freedom comes the freedom to confirm one's beliefs. See here for how different researchers come to completely contrary conclusions with the same data.

[6] E.g., the case of Francesca Gino could only come to light because of the shift in how data are handled; prior to the Open Science movement, nobody bothered to post data publicly. Data were, at best, available on request. This, coupled with 3 guys apparently having too much time on their hands to twiddle with weird-looking Excel sheets, resulted in an in-depth investigation and a court case.

[7] Despite the lower number, this is good: it shows that studies also fail to find what they seek, so we can correct course in the next studies. Without this, more garbage would just be produced, since nobody's gonna track down some random unpublished literature they can't even conceive of. Here’s the study behind the numbers.

[8] I.e., pitting two theories against each other and picking the one the data support best. But then again, this requires us to actually have testable theories, which we don’t. We have mostly ordinal, verbal theories that lack any information about the expected magnitude of effect or the contexts in which they apply (= weak, untestable theories). More on that here. I am unsure how widely adopted this practice already is, if at all; my sense is it isn’t.

[10] But it is very important for sating my desire to mention DYSON AIRBLADE in all caps, so I'm leaving it in.

[11] Another important assumption, which I will not be discussing, is this: psychological events or entities can be captured and operationalized through items and categorized into neat little labels, like "motivation", "emotion", and "ego depletion" (which lets us experiment on them).

[12] For an entire book on that topic, I recommend The Biological Mind by Alan Jasanoff.

Because I’m not enough of a monster to spoil you with a video of that!

[13] Of course, there are situations (and not a few) where we can argue that some factor is meaningfully relevant and causal to some psychological event or entity; I’m not denying that.

[14] 93, almost as old as me. And you actually need to pay $399 to Paul Ekman to use these! Hope I’m fine linking a study that used them. I should be. God, I hope I am: I’m terrified of the American court system more than anything else in the world.

[15] Here, let me solve the conundrum of why psychologists still use these 90s faces in their studies: it is because they have been extensively validated and are reliable. Psychology likes validated and reliable measures for various reasons, which is why you might deal with slightly outdated-looking material: validating and reliabilizing (a new word has been christened, all hail!) new ones is a bitch.

[16] Yes, I sense that psychology will become (or already has become) a field for applied mathematics and programming.

[17] Head over to Slime Mold Time Mold for more on N = 1 experiments.
